The Ethics of AI in Marketing: A Guide to Responsible & Transparent Practices

For mid-to-senior advertising executives, including CMOs, VPs, and Directors who must weigh innovation against corporate risk, the question is not whether to use artificial intelligence but how to deploy it responsibly.

This guide offers a practical framework for doing exactly that: identifying the main ethical risks in AI-powered advertising, setting up governance structures, and embedding ethical principles into every phase of the AI lifecycle.

Key Ethical Issues in AI-Powered Advertising

Data Privacy and Consumer Trust

AI advertising platforms are data-hungry by nature. To reach the predictive accuracy advertisers want, they need large sets of behavioral signals, demographic attributes, purchase histories, and browsing habits.

But 75% of consumers are concerned about artificial intelligence acquiring their private information, and 60% say their main concern is AI drawing conclusions about them without their knowledge (Salesforce 2023).

The ethical duty here is clear: businesses must go beyond basic legal compliance and treat data stewardship as a core corporate value. That means collecting only the information actually needed for a specific purpose, being clear with consumers about what is collected and why, obtaining genuine, informed consent, and offering simple ways for individuals to view, correct, or delete their data.

When health apps secretly share sensitive personal details with third-party advertisers, a pattern documented by The Wall Street Journal, they do not simply break rules; they destroy the implicit trust that underpins every consumer relationship.

Algorithmic Bias and Fairness

For advertisers, algorithmic bias creates legal exposure under anti-discrimination laws, reputational risk when disparities become public, and, most importantly, real harm to the people subjected to biased decisions.

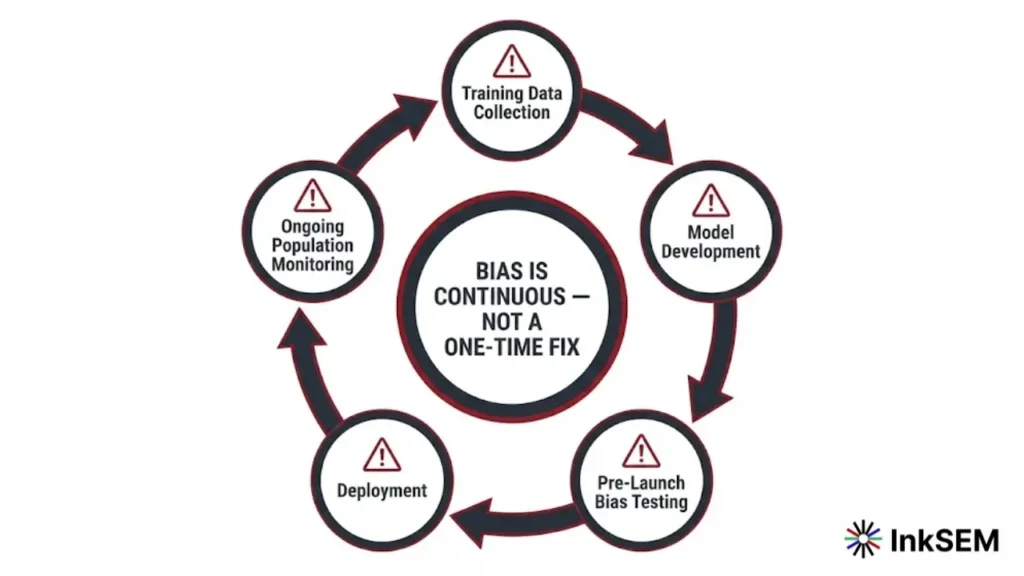

Fixing bias is not a one-time technical repair. It requires continuous vigilance across the whole model lifecycle, from collecting training data through deployment and monitoring.

Manipulative Nudging

Behavioral science gives marketers a range of psychological techniques for influencing consumer choices, such as scarcity cues, loss framing, social proof, and reciprocity triggers. AI amplifies these tools because it can personalize in real time and tailor nudges to individual cognitive vulnerabilities at scale.

The ethical line between persuasion and manipulation is not always obvious. A useful test is whether a nudge helps consumers make choices that match their true values and preferences, or whether it exploits cognitive weaknesses to produce decisions they may later regret.

The clearest violations are dark patterns: artificially inflated countdown timers, hidden cancellation flows, and pre-checked consent boxes. Forward-thinking managers are embracing value-driven personalization that serves consumer needs rather than simply manufacturing compliance, especially through channels that support direct, consent-led engagement, such as personalized WhatsApp customer journeys.

Transparency and Explainability

Many of the most effective AI models work like black boxes: they produce outputs, but their internal logic is difficult or even impossible to interpret. Some of the most serious ethical problems in advertising stem from this opacity.

Consumers cannot give meaningful consent to decisions they do not understand. Compliance professionals cannot audit systems that are opaque. And when an AI-driven action causes harm, such as a denied mortgage or a misleading advertisement, there is no clear chain of reasoning to investigate or correct.

The rapidly developing field of Explainable AI (XAI) addresses this problem with techniques that make models easier to interpret, including feature importance rankings, counterfactual explanations, and model-agnostic interpretation tools. Integrating XAI into marketing AI systems is becoming a compliance requirement as well as an ethical one.
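One simple, model-agnostic way to rank how much each input matters to a model's output is permutation importance: shuffle one feature at a time and measure how much accuracy falls. The sketch below is a minimal illustration only. The `toy_model` function, the feature names, and the data are hypothetical, and a production system would normally rely on an established library rather than hand-rolled code.

```python
import random


def permutation_importance(predict, rows, labels, seed=0):
    """Return the accuracy drop observed when each feature column is shuffled."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable

    def accuracy(data):
        hits = sum(predict(row) == label for row, label in zip(data, labels))
        return hits / len(labels)

    baseline = accuracy(rows)
    drops = []
    for j in range(len(rows[0])):
        column = [row[j] for row in rows]
        rng.shuffle(column)  # break the link between feature j and the label
        shuffled = [row[:j] + (value,) + row[j + 1:]
                    for row, value in zip(rows, column)]
        drops.append(baseline - accuracy(shuffled))
    return drops


# Hypothetical model: shows an offer based only on past purchases (feature 0).
def toy_model(row):
    return int(row[0] >= 3)


# Each row is (past_purchases, days_since_visit); both fields are illustrative.
rows = [(0, 5), (1, 9), (2, 1), (3, 7), (4, 2), (5, 8), (6, 3), (7, 6)]
labels = [toy_model(row) for row in rows]

drops = permutation_importance(toy_model, rows, labels)
print(drops)  # the second value is 0.0 because the toy model ignores that feature
```

A feature whose shuffling leaves accuracy unchanged is one the model does not depend on, which gives reviewers a concrete starting point when asking what drives a targeting decision.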

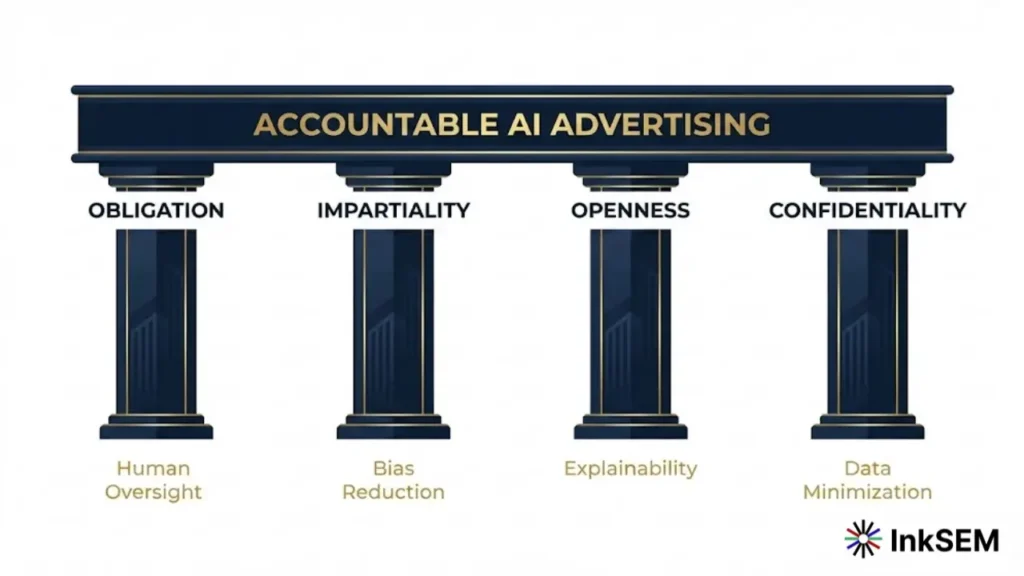

Pillars of Responsible AI Advertising

Responsible AI advertising rests on four interconnected pillars, each of which must be deliberately built rather than taken for granted.

Accountability

Every AI system used in advertising must have a named human owner, whether an individual or a team, who is accountable for its results and impacts. Accountability requires clearly defined governance frameworks, including explicit decision authority, escalation paths, and mechanisms for stakeholders to raise concerns.

Leading companies are forming AI Ethics Boards with members from legal, advertising, data science, compliance, and, most importantly, consumer advocacy. These boards oversee the company's AI ethics policies and have the authority to pause or cancel AI projects that violate them.

Fairness

Achieving fairness in AI-powered advertising requires deliberate steps across the entire model lifecycle. It starts with training data, which must consist of diverse, representative datasets gathered with awareness of historical biases. It continues into model development, where bias-mitigation techniques such as reweighting, adversarial debiasing, and fairness constraints can be applied.

It then extends into deployment and monitoring, where continuous audits track model outcomes across demographic groups to catch emerging disparate impacts. Importantly, fairness cannot be achieved without diverse teams, because homogeneous data science teams are less likely to spot the blind spots that produce biased systems.
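The weight-adjustment strategy mentioned above can be illustrated with one common scheme, often called reweighing, which gives each training example the weight P(group) × P(label) / P(group, label) so that group membership and outcome look statistically independent to the learner. This is a minimal sketch under that assumption. The group names and labels are made up, and it is not presented as the method any particular platform uses.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Weight each example by P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


# Illustrative data: group "a" received the positive label more often than "b".
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
for g, y, w in zip(groups, labels, weights):
    print(g, y, round(w, 3))
```

Under-represented combinations (here, a positive label in group "b") receive larger weights, so a model trained with these sample weights sees both groups with the same weighted positive rate.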

Transparency

Transparency in AI advertising operates on several levels. At the system level, it means documenting model architectures, training data sources, and performance characteristics. At the consumer level, it means clear disclosures about when and how AI is used, ideally delivered just in time, at the moment of an AI-powered interaction.

Explainable AI tools strengthen transparency by making the logic behind AI decisions understandable to non-expert stakeholders. From a regulatory standpoint, transparency is no longer optional: the EU AI Act imposes transparency requirements on a wide range of AI uses, including those employed in advertising.

Privacy

Privacy-respecting AI advertising is built on data minimization: collect the least personal data needed to accomplish a legitimate purpose. It is supported by strong security measures, clear retention policies, and, above all, genuine user control.

Consumers must be able to see what information is held about them. They should also be able to correct errors, withdraw consent, or opt out of AI-based profiling without penalty. The highest standard is privacy by design, in which privacy protections are built into AI systems from the very beginning rather than added later.
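The minimization idea above can be made concrete with a purpose-based allowlist: a record is stripped down to the fields a declared purpose needs, and nothing is used if consent for that purpose is missing. The sketch below is illustrative only. The purposes, field names, and `ALLOWED_FIELDS` mapping are hypothetical and would come from your own data inventory.

```python
# Hypothetical mapping from a declared purpose to the fields it actually needs.
ALLOWED_FIELDS = {
    "order_updates": {"email", "order_id"},
    "recommendations": {"purchase_history"},
}


def minimize(record, purpose, consented_purposes):
    """Keep only the fields needed for a purpose the person has consented to."""
    if purpose not in consented_purposes:
        return {}  # no consent for this purpose: use nothing
    allowed = ALLOWED_FIELDS.get(purpose, set())
    return {field: value for field, value in record.items() if field in allowed}


profile = {
    "email": "sam@example.com",
    "order_id": "A-1001",
    "purchase_history": ["running shoes"],
    "health_notes": "asthma",
}

consented = {"order_updates"}
updates_view = minimize(profile, "order_updates", consented)
recs_view = minimize(profile, "recommendations", consented)
print(updates_view)  # only email and order_id survive
print(recs_view)     # empty, because consent for recommendations was not given
```

Placing a filter like this in front of every model input is one way to build the protection in from the start, since sensitive fields such as `health_notes` never reach the AI system unless a purpose explicitly requires them.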

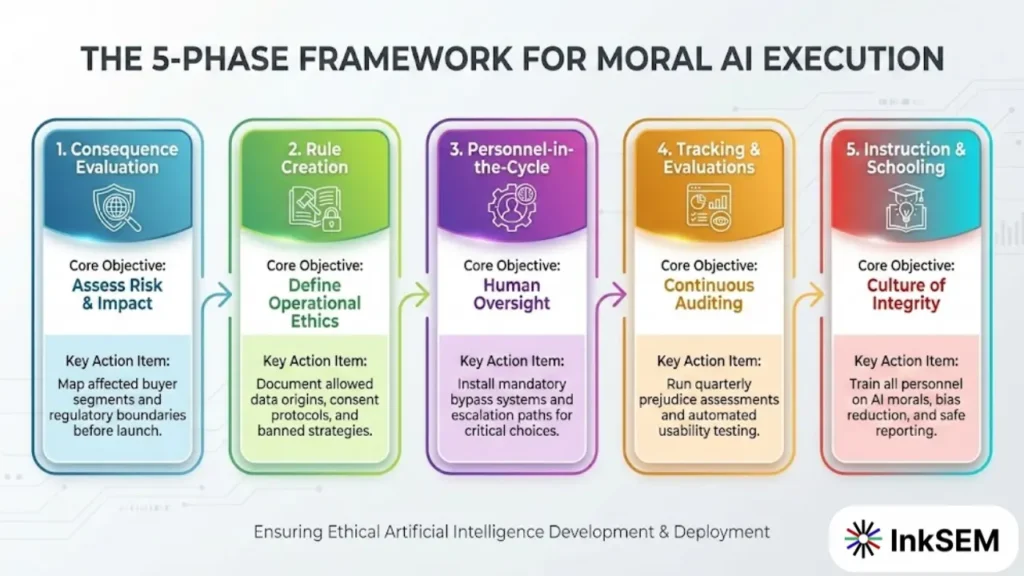

A 5-Step Framework for Ethical AI Implementation

Moving from principles to practice requires a structured implementation framework. The following five-phase process gives advertising managers a repeatable method for deploying AI responsibly.

Phase 1: Impact Assessment

Before deploying an AI advertising system, conduct a thorough impact assessment that evaluates potential risks across several areas. Identify which consumer segments the system's outputs will affect, and how. Map the applicable regulatory framework, including GDPR, the EU AI Act, FTC guidelines, and any industry-specific regulations.

Analyze the system's societal effects at scale: if large numbers of consumers follow these AI recommendations, what are the aggregate outcomes? Document your findings and secure sign-off from legal, compliance, and leadership before proceeding.

High-risk uses, such as those involving sensitive personal data, consequential decisions, or vulnerable groups, call for deeper scrutiny and may require an independent ethics review.
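The triage described above can be captured as a simple rule: any use case that touches sensitive data, consequential decisions, or vulnerable groups is routed to the deeper review track. The sketch below is one possible encoding. The `UseCase` fields and tier names are assumptions for illustration, not a standard taxonomy.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    uses_sensitive_data: bool
    consequential_decision: bool
    targets_vulnerable_group: bool


def review_tier(use_case):
    """Return the review track for a proposed AI use case (tier names are illustrative)."""
    high_risk = (
        use_case.uses_sensitive_data
        or use_case.consequential_decision
        or use_case.targets_vulnerable_group
    )
    return "enhanced_review" if high_risk else "standard_review"


subject_lines = UseCase("email subject-line testing", False, False, False)
credit_offers = UseCase("credit offer targeting", True, True, False)

print(review_tier(subject_lines))  # standard_review
print(review_tier(credit_offers))  # enhanced_review
```

Recording the three flags for every use case also produces the documentation trail that the sign-off step depends on.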

Phase 2: Policy and Guideline Development

Translate your ethical principles into operational policies that give employees clear instructions. A comprehensive AI advertising policy handbook should cover:

- Permitted data sources and collection methods

- Standards for consent and opt-out mechanisms

- Prohibited use cases and manipulative tactics

- Bias-testing requirements and acceptable performance thresholds across demographic segments

- Incident-response procedures for when AI systems cause harm

- Documentation and audit-log requirements

Policies should be living documents, reviewed regularly as legislation, technology, and consumer expectations change.

Phase 3: Human-in-the-Loop Systems

Deciding where human judgment must stay in the loop is one of the most important design choices in AI advertising. For high-stakes decisions, such as personalized pricing, credit-related offers, and targeting of vulnerable groups, AI should present recommendations for human review rather than act autonomously.

Build explicit override mechanisms that let human reviewers reject AI recommendations easily. Set up escalation procedures for edge cases that fall outside the system's training scope. The goal is not to limit the usefulness of AI but to ensure that significant decisions retain genuine human accountability.
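The review, override, and escalation paths described above can be sketched as a small routing function. This is an illustrative outline rather than a reference design: the decision types in `HIGH_STAKES`, the status strings, and the `in_training_scope` flag are all hypothetical names.

```python
# Hypothetical decision types that must never be applied without a human.
HIGH_STAKES = {"personalized_pricing", "credit_offer", "vulnerable_audience"}


def route(decision_type, recommendation, in_training_scope=True):
    """Decide whether an AI recommendation is applied, reviewed, or escalated."""
    if not in_training_scope:
        # Edge case the model was not trained for: send it up the chain.
        return {"status": "escalated", "recommendation": recommendation}
    if decision_type in HIGH_STAKES:
        return {"status": "pending_human_review", "recommendation": recommendation}
    return {"status": "auto_applied", "recommendation": recommendation}


def human_review(ticket, approve, replacement=None):
    """A reviewer either accepts the AI suggestion or overrides it."""
    if approve:
        return {**ticket, "status": "approved_by_human"}
    return {**ticket, "status": "overridden_by_human", "recommendation": replacement}


ticket = route("personalized_pricing", "raise price 12%")
final = human_review(ticket, approve=False, replacement="keep list price")
print(ticket["status"])  # pending_human_review
print(final)
```

Keeping the override a single call with no extra justification step reflects the point that rejecting an AI suggestion should be easy for the reviewer.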

Phase 4: Monitoring and Audits

Ethical AI requires continuous monitoring, not just a one-time certification. Implement real-time tracking across diverse populations to quickly flag irregularities. Tools like Loop11 that use AI browser agents to simulate user experiences add a vital behavioral validation layer, uncovering friction points that traditional analytics miss.

Additionally, conduct bias assessments quarterly for high-frequency models and schedule annual independent audits for critical applications. Sharing these findings with leadership and the public is essential, as transparent AI ethics are increasingly vital to stakeholders.
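One lightweight check for the tracking described above is to compare how often each demographic group receives a favorable outcome and flag the system when the lowest rate falls too far below the highest. The sketch below assumes a ratio threshold of 0.8, a rule of thumb borrowed from US employment-selection guidance. The right threshold for an advertising system is a policy decision, and the group names and events are invented.

```python
from collections import defaultdict

RATIO_THRESHOLD = 0.8  # assumed alert level; set this in your own policy


def selection_rates(events):
    """events: iterable of (group, received_offer) pairs."""
    favorable = defaultdict(int)
    total = defaultdict(int)
    for group, received_offer in events:
        total[group] += 1
        favorable[group] += int(received_offer)
    return {group: favorable[group] / total[group] for group in total}


def disparity_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody received the outcome, so there is no gap to measure
    return min(rates.values()) / highest


events = (
    [("group_a", True)] * 4 + [("group_a", False)] * 1
    + [("group_b", True)] * 2 + [("group_b", False)] * 3
)

rates = selection_rates(events)
ratio = disparity_ratio(rates)
flagged = ratio < RATIO_THRESHOLD
print(rates, ratio, flagged)
```

Running the same calculation on each reporting period, rather than once before launch, is what turns it into monitoring.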

Phase 5: Training and Education

Alongside structural safeguards, ethical AI needs a workforce that understands and is committed to ethical principles. Design a tiered training program: foundational AI ethics training for everyone in advertising, in-depth training on identifying and mitigating bias for employees who build and use AI systems, and advanced ethics-governance training for those who set policy and sit on AI Ethics Boards.

Collaborate with outside experts, academic groups, and industry associations to bring in new perspectives and adopt best practices. Put mechanisms in place that let employees raise ethical concerns about AI systems without fear of reprisal.

Integrating AI Ethics with Corporate Responsibility and Brand Strategy

For forward-thinking companies, AI ethics is a strategic advantage rather than a compliance burden separate from brand strategy. Consumers, employees, and investors increasingly judge companies on their ethical use of technology. Organizations that show real commitment to responsible AI earn a trust premium that translates into loyalty, advocacy, and resilience when controversies arise.

Align AI ethics initiatives with your wider corporate social responsibility (CSR) framework by setting measurable goals, for instance reducing demographic disparities in AI-driven outcomes by a specific percentage within a set timeframe. Report progress publicly alongside other ESG metrics.

Participate in industry groups developing shared standards for ethical AI in advertising, such as the Partnership on AI and the Digital Advertising Alliance's AI accountability programs. Consider forming a Consumer AI Advisory Board that gives consumers a direct voice in how AI is used in ways that affect them.

The businesses that will succeed in an AI-driven economy are not the ones that use AI most aggressively but the ones that use it most responsibly, building the lasting trust that sustains long-term consumer relationships.

Regulatory Landscape and Industry Standards

The regulatory environment for AI in advertising is changing quickly, and the pace of change is accelerating. Advertising managers cannot treat compliance as a lagging indicator. They must track regulatory developments proactively and build flexible governance systems that can respond quickly to new requirements.

| Regulation | Jurisdiction | Key requirements | Maximum penalties |
| --- | --- | --- | --- |
| GDPR | European Union | Explicit consent, data minimization, right to erasure, breach notification | Up to 4% of global annual turnover or €20M, whichever is higher |
| EU AI Act | European Union | Risk-based classification, transparency obligations, human oversight for high-risk AI | Up to 7% of global annual turnover or €35M for prohibited AI practices |
| FTC AI Guidelines | United States | Truthful claims, bias prevention, consumer protection, and data security | Civil penalties, injunctions, and restitution |
| CCPA / CPRA | California, USA | Right to know, opt-out of data sales, non-discrimination, limits on automated decision-making | Up to $7,500 per intentional violation |
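To show how a percentage-or-fixed-amount ceiling plays out, the sketch below computes the GDPR figure from the table for a given turnover. It assumes the "or" means whichever amount is higher, which is how GDPR's upper fine tier is worded. The function name and sample turnovers are illustrative, and actual fines are set case by case below this ceiling.

```python
GDPR_FIXED_CAP_EUR = 20_000_000
GDPR_TURNOVER_PERCENT = 4


def gdpr_fine_ceiling(annual_turnover_eur):
    """Upper bound on a GDPR fine: the higher of the fixed cap and 4% of turnover."""
    percent_cap = annual_turnover_eur * GDPR_TURNOVER_PERCENT // 100
    return max(GDPR_FIXED_CAP_EUR, percent_cap)


small_company = gdpr_fine_ceiling(100_000_000)    # 4% would be 4M, so the fixed cap applies
large_company = gdpr_fine_ceiling(1_000_000_000)  # 4% is 40M, which exceeds the fixed cap
print(small_company, large_company)
```

For smaller companies the fixed amount dominates, while for large ones the exposure scales with revenue.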

Beyond formal legislation, industry self-regulatory frameworks are raising the bar for acceptable AI advertising practices. Both the Interactive Advertising Bureau (IAB) and the Digital Advertising Alliance (DAA) have released AI-focused guidance for their members.

Major advertising platforms, including Google, Meta, and Amazon, have put their own AI ethics policies in place, which effectively set operational standards for companies working on their platforms.

The sensible approach is to build AI advertising systems to meet the strictest applicable standards, typically GDPR and the EU AI Act for global operations, rather than designing for the lowest regional requirements, which may soon be superseded.


Algorithmic Bias in Ad Targeting

A pattern seen repeatedly across platforms is that AI advertising systems deliver different options to different groups of people in ways that reflect and exacerbate existing inequality. Job advertisements for senior positions have been found to be shown to men far more often than to equally qualified women.

Housing ads have been delivered at different rates depending on people's ethnicity. Ads for financial products have shown demographic patterns that mirror historically discriminatory lending practices.

These outcomes are not always the result of overt discriminatory intent. They emerge from AI systems that optimize for engagement or sales using training data that encodes historical inequalities. That is why continuous bias evaluation, rather than one-time pre-launch screening, is essential: disparities can emerge gradually as the model's behavior shifts with changing data.

Final Thoughts and Action Steps

The ethics of AI in advertising are not a philosophical abstraction. They are a practical challenge with concrete effects on consumer well-being, corporate reputation, customer satisfaction, regulatory compliance, and long-term financial performance. The good news is that responsible AI advertising is achievable, and investment in doing it properly pays off in consumer trust, organizational resilience, and sustainable growth.

The framework described in this guide gives advertising executives a clear path forward. It is built on the four pillars of accountability, fairness, transparency, and privacy, and implemented through impact assessment, policy development, human oversight, monitoring, and training. Whether you are launching a new AI advertising initiative or evaluating an existing one, the following five actions offer a practical starting point.

Action Steps for Advertising Managers:

- Establish an AI Ethics Board as your first and most fundamental step. Assemble a cross-functional team spanning advertising, legal, data science, compliance, and consumer advocacy, and give it explicit authority to set standards, review AI projects, and escalate issues to senior leadership. Without this governance foundation, all other ethical AI efforts rest on unstable ground.

- Conduct an immediate audit of your existing AI advertising systems. For each system, document the data sources used, the decisions the AI influences, the demographic groups affected, and the oversight mechanisms in place. Identify your highest-risk uses and prioritize them for deeper review.

- Require bias assessments as a condition of AI deployment and as an ongoing operational requirement. Define acceptable performance thresholds across demographic segments before deployment, and set a monitoring cadence that catches drift before it causes harm.

- Produce and publish a consumer-facing AI transparency statement. In plain language, explain how AI is used in your advertising, what data informs it, and how consumers can exercise control. Make this statement easy to find rather than buried in a privacy policy that nobody reads.

- Invest in training and workplace culture. Governance structures alone cannot sustain ethical AI; it takes a workforce that understands the stakes and feels empowered to raise concerns. Build AI ethics into onboarding, ongoing professional development, and the metrics by which advertising performance is judged.

The era of AI advertising without accountability is coming to a close, driven by legislation, consumer expectations, and the hard lessons of high-profile failures. The executives who embrace ethical AI as a competitive advantage rather than a constraint will build the next generation of trusted, high-performing advertising organizations.